When a fintech client lost 60% of their organic traffic overnight, the panic was real. What followed was a forced audit that produced a 42% traffic lift, and a repeatable framework for doing the same thing without the penalty.

A manual penalty from Google is not an algorithm fluctuation you can wait out. It is a human reviewer at Google deciding your site does not deserve to rank.

Most agencies treat recovery as damage control. We treated it as the most thorough site review Google would ever give us, and we made sure the site they saw was worth rewarding.

This is the account of what happened, what we did differently, and why the same logic applies even if your site has never seen a penalty notification in Search Console.

How to Check If Your Site Has a Google Penalty

Before any recovery work can begin, you need to confirm what you are dealing with.

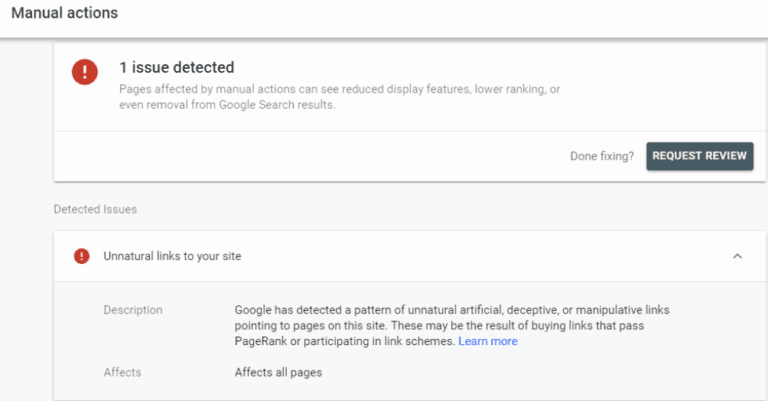

Open Google Search Console and navigate to Security and Manual Actions > Manual Actions. If a manual action exists, it will be listed here with the penalty type and the pages affected. If this section shows "No issues detected," you do not have a manual penalty.

If you have experienced a sudden traffic drop without a Search Console notification, you are likely dealing with an algorithmic penalty — a ranking adjustment from a core update, the Helpful Content system, or Penguin. These require a fundamentally different recovery approach: substantive content and quality improvements rather than a reconsideration request.

The distinction matters because many site owners submit reconsideration requests for algorithmic drops, wait weeks for a response that never comes, and miss the actual fix window.

The Situation: A Good Week That Fell Apart

The client was a fintech startup operating in the unlisted shares space. We'll call them FinX. After months of steady SEO work, they were seeing consistent organic growth, and one Tuesday morning, the account manager forwarded a client email: traffic was up 22% week over week.

Forty-eight hours later, I logged into Search Console and saw this:

Google Search Console Notification

Manual Action: Unnatural Links to Your Site. Some of your pages may not be appearing on Google.

Organic visibility had dropped over 60% within hours of the notification. The account was worth approximately $30,000 per month in retained revenue. And the timing, immediately after a positive client check-in, made it worse.

This was not an algorithm update. It was a targeted decision by a human reviewer at Google who had examined the backlink profile and found it manipulative.

The distinction matters because the recovery process is entirely different and, as it turned out, significantly more revealing.

What Caused It: The Overengineered Links Problem

Earlier that month, the team had placed three off-site articles with backlinks pointing to FinX's domain. The sites themselves were clean. The content was relevant to the fintech niche. None of it was obviously spammy.

The problem was the pattern. All three articles had exact-match anchor text targeting commercial keywords, with no variation across placements, no natural branded anchors, and minimal contextual distinction between them. To a human reviewer, it read like a coordinated attempt to manipulate rankings. Because it was, even if the intent was standard link-building practice.

Why This Triggers a Manual Review

Google's webspam team looks at link patterns across a domain's entire backlink profile.

A cluster of links that all point to the same pages, with the same anchor text, from sites published in the same window, is one of the clearer signals of orchestrated link building. It does not matter that the individual links were placed on legitimate sites.
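The pattern described above can be sketched in a few lines of Python. This is only an illustration, not how Google's reviewers work: the field names (`source`, `target`, `anchor`, `first_seen`), the 30-day window, the minimum cluster size, and all sample domains and dates are assumptions made up for the example.

```python
from collections import defaultdict
from datetime import date


def find_link_clusters(links, window_days=30, min_size=3):
    """Group links by (target, anchor) and flag groups whose links
    were all first seen within `window_days` of each other."""
    groups = defaultdict(list)
    for link in links:
        key = (link["target"], link["anchor"].strip().lower())
        groups[key].append(link)

    clusters = []
    for (target, anchor), members in groups.items():
        if len(members) < min_size:
            continue
        dates = [m["first_seen"] for m in members]
        span = (max(dates) - min(dates)).days
        if span <= window_days:
            clusters.append({
                "target": target,
                "anchor": anchor,
                "sources": sorted(m["source"] for m in members),
                "span_days": span,
            })
    return clusters


# Hypothetical sample data: three placements with identical anchor text
# published close together, plus one unrelated branded link.
links = [
    {"source": "site-a.example", "target": "https://finx.example/unlisted-shares",
     "anchor": "buy unlisted shares", "first_seen": date(2024, 3, 4)},
    {"source": "site-b.example", "target": "https://finx.example/unlisted-shares",
     "anchor": "Buy Unlisted Shares", "first_seen": date(2024, 3, 11)},
    {"source": "site-c.example", "target": "https://finx.example/unlisted-shares",
     "anchor": "buy unlisted shares", "first_seen": date(2024, 3, 15)},
    {"source": "news.example", "target": "https://finx.example/",
     "anchor": "FinX", "first_seen": date(2023, 11, 2)},
]

clusters = find_link_clusters(links)
for cluster in clusters:
    print(cluster)
```

A backlink export from any link tool could feed the same function once its columns are mapped to these assumed field names.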

The deeper audit also surfaced older problems: a legacy of low-quality directory submissions, a handful of forum profiles with links, and two or three guest posts from an earlier vendor that were borderline at best. The three recent articles were the trigger, but they dropped into a profile that was already carrying weight it did not need to carry.

The Standard Recovery Playbook (And Why We Went Further)

The textbook response to a manual penalty is straightforward:

Step 1: Audit the entire backlink profile. Flag every link that is low-quality, irrelevant, or manipulative.

Step 2: Attempt link removal outreach. Document the outreach, even when sites do not respond, which most will not.

Step 3: Upload a disavow file to Google Search Console for any links you cannot have removed. The disavow file is a plain text document listing domains or URLs you want Google to ignore. Format each domain line as domain:example.com. Be conservative — disavowing legitimate links by mistake hurts your rankings.

Step 4: Submit a reconsideration request with documentation of what you found, what you removed, and what you disavowed.

Step 5: Wait. Google gives no timeline. Responses typically arrive within two to six weeks.
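For Step 3, a minimal Python sketch of assembling the disavow file is shown here. The `domain:` prefix and one-entry-per-line layout come from the description above, and lines starting with `#` are treated as comments in the disavow format. The function name, the sample domains, and the note text are hypothetical.

```python
def build_disavow_file(domains, urls, note=""):
    """Return disavow file contents: an optional comment line,
    then one `domain:` entry per domain, then individual URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize and de-duplicate so the same domain is not listed twice.
    for domain in sorted({d.strip().lower() for d in domains}):
        lines.append(f"domain:{domain}")
    for url in sorted({u.strip() for u in urls}):
        lines.append(url)
    return "\n".join(lines) + "\n"


# Hypothetical entries: only links that could not be removed via outreach.
disavow_text = build_disavow_file(
    domains=["Spam-Directory.example", "spam-directory.example", "link-farm.example"],
    urls=["https://forum.example/profile/123"],
    note="Outreach sent, no response",
)
print(disavow_text)
```

Saving the returned text as a plain `.txt` file gives you the document to upload. Reviewing the list by hand before upload is still worthwhile, for the reason given in Step 3.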

We did all of this. But at the point of drafting the reconsideration request, we stopped and reconsidered the document's purpose.

Most reconsideration requests are confessions. They explain what went wrong and promise it will not happen again. That is the minimum required to get the penalty lifted. It is also the ceiling of what most teams aim for.

A reconsideration request is one of the only moments where you know a real person at Google is looking at your site. The question is whether you treat that as a formality or a presentation.

We withdrew our initial draft and started again.

Turning the Request Into a Site Review

The insight was simple: when a manual reviewer evaluates your reconsideration request, they are not just checking whether the bad links are gone. They are looking at the site. They are deciding whether it is worthy of ranking.

Every signal they encounter during that review either supports or undermines your case.

We used the two weeks before resubmission to make changes we had been planning but deprioritizing, and then built the evidence directly into the request document.

Content Quality Documentation

We identified fifteen cornerstone articles covering FinX's core financial topics and ran a substantive update on each one: verified statistics, expanded explanations, improved structure, and updated metadata.

We attached before-and-after screenshots to the reconsideration request for six of these, with links to the live pages.

The framing in the request was not "we improved our content." It was: "These pages represent the depth of expertise the site brings to users researching unlisted shares. The penalty is currently limiting access to genuinely helpful content."

Technical Evidence

We fixed a set of Core Web Vitals issues that had been on the backlog for two months. Largest Contentful Paint on the key landing pages dropped by 40%, which we documented with before-and-after Lighthouse reports. We also resolved 38 internal 404s, rewrote duplicate meta titles across category pages, and cleaned up a crawl bloat issue affecting the blog archive.

None of these were penalty-related. All of them were signals that the site was well-maintained and technically sound.
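To document a change like the LCP improvement, the before-and-after comparison can be pulled from two Lighthouse JSON reports. The sketch below assumes the standard report layout, where the LCP audit sits under `audits["largest-contentful-paint"]` with a `numericValue` in milliseconds. The two inline reports and their values are illustrative stand-ins, not FinX's actual measurements.

```python
import json


def lcp_ms(report):
    """Return Largest Contentful Paint in milliseconds from a parsed Lighthouse report."""
    return report["audits"]["largest-contentful-paint"]["numericValue"]


def percent_change(before, after):
    """Relative change from `before` to `after`, as a percentage."""
    return (after - before) / before * 100


# Illustrative stand-ins for two exported reports (normally loaded with json.load).
before_report = json.loads('{"audits": {"largest-contentful-paint": {"numericValue": 4200}}}')
after_report = json.loads('{"audits": {"largest-contentful-paint": {"numericValue": 2520}}}')

change = percent_change(lcp_ms(before_report), lcp_ms(after_report))
print(f"LCP before: {lcp_ms(before_report)} ms, after: {lcp_ms(after_report)} ms, change: {change:.0f}%")
```

Running the same comparison across every key landing page yields a small table that can be attached to the request alongside the reports themselves.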

E-E-A-T Reinforcement

FinX's author pages were thin. Expert bios existed but lacked credentials, publication history, and professional context. We rewrote them with meaningful depth and added an editorial process page outlining how the site approaches financial content, including sourcing standards and review procedures.

For a fintech site operating in a YMYL category, this is not optional. Google's quality raters use authoritativeness and trustworthiness signals to evaluate sites in financial, health, and legal verticals. Having them underdeveloped was a liability regardless of the penalty.

Proactive Link Quality Evidence

Instead of only listing the links we removed, we also showed what good links looked like.

We included five examples that the site had legitimately earned in the previous quarter. Each example explained how the link was acquired. It also noted the publication's domain authority and its topical relevance.

The framing was: "This is what our link-building approach looks like now. Here is evidence it is already working."

The Results (And What They Actually Mean)

Ten days after resubmitting, the Search Console notification read:

"We reviewed your site and found that the manual action we took is now revoked."

Traffic recovery began within 48 hours. By the end of the week, FinX's organic sessions had surpassed pre-penalty levels. By the end of the month, the numbers were 42% higher than they were before the penalty hit.

| Metric | Pre-Penalty | Post-Recovery | Change |
|---|---|---|---|
| Organic sessions (monthly) | Baseline | +42% vs. baseline | +42% |
| Rankings on target keywords | Positions 4–9 | Positions 2–6 | +2–3 positions avg. |
| New keyword rankings (untracked) | - | ~40 additional terms | Net new coverage |
| Core Web Vitals (LCP, key pages) | Failing | Passing | -40% LCP |

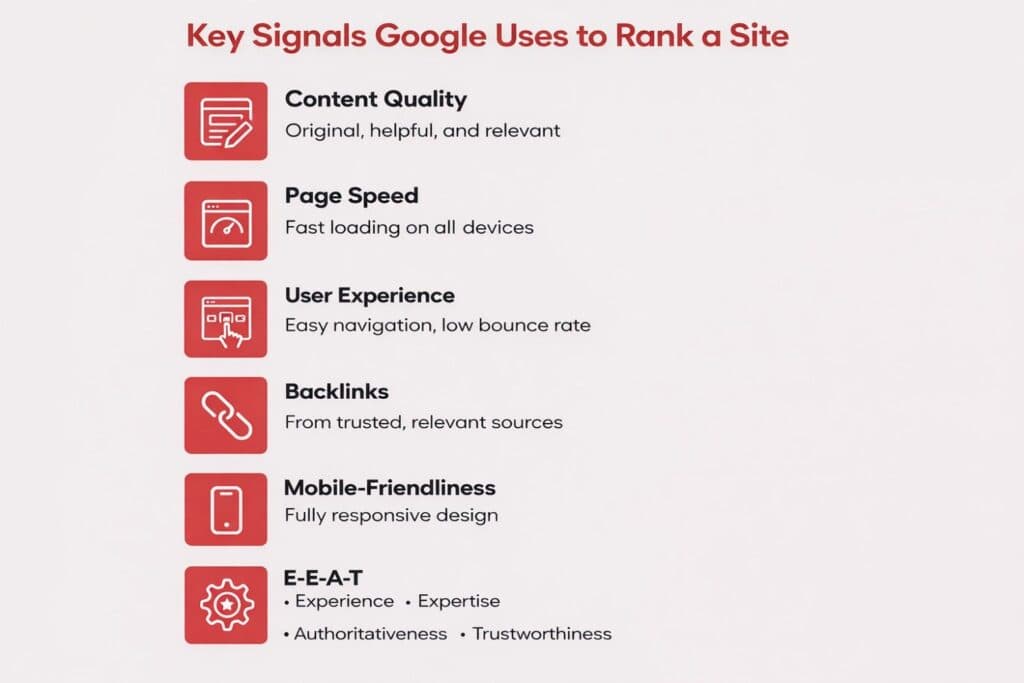

The uplift came from two compounding effects. First, the penalty removal restored the domain's ability to rank. Second, the improvements made during the recovery period — content quality, technical performance, and E-E-A-T signals — meant the site Google re-evaluated was materially better than the one it had penalized. The recrawl that accompanied the penalty removal encountered a site that had been optimized specifically for what reviewers and quality raters assess.

That is not luck. It is the difference between treating recovery as a defensive task and treating it as an offensive opportunity.

The Framework: Applying This Without a Penalty

The mechanics of what happened here are available to any site, at any time. A manual penalty creates urgency that forces the work. For most sites, the same work sits in a backlog of "important but not urgent" items that never get properly addressed.

Here is how to replicate the outcome without waiting for a crisis.

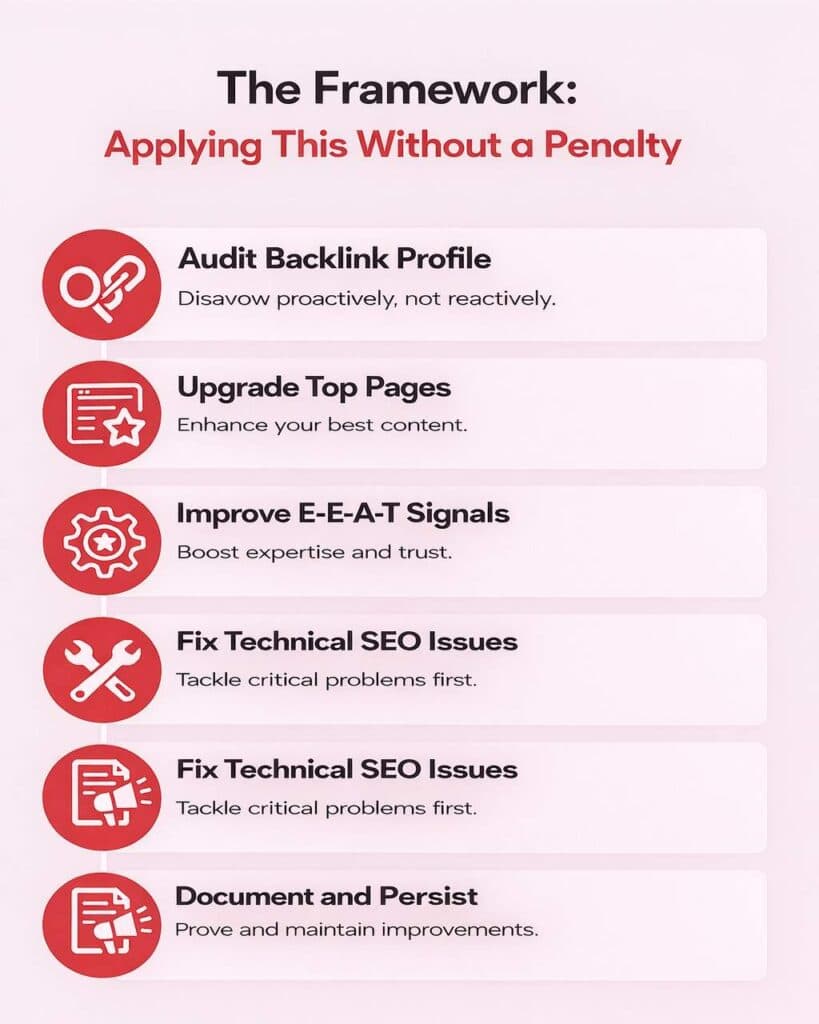

01 Audit your backlink profile as though you just received a manual action

A thorough SEO audit starts with your backlink profile. Pull your full link profile and look for patterns rather than individual links:

- anchor text concentration
- geographic clustering of referring domains
- publication dates that suggest coordinated placement
- link velocity spikes that do not correspond to content releases
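The first of these checks, anchor text concentration, is easy to approximate. The Python sketch below covers only that one pattern. The 30% threshold, the branded-term list, and the sample anchors are assumptions chosen for illustration. There is no published cut-off, so treat the output as a prompt for manual review rather than a verdict.

```python
from collections import Counter


def anchor_concentration(anchors):
    """Return (anchor, share) pairs, most frequent first."""
    counts = Counter(a.strip().lower() for a in anchors)
    total = sum(counts.values())
    return [(anchor, n / total) for anchor, n in counts.most_common()]


def flag_concentrated(anchors, branded_terms, threshold=0.3):
    """Flag non-branded anchors whose share meets or exceeds `threshold`.
    The threshold is an arbitrary assumption, not a known Google limit."""
    flagged = []
    for anchor, share in anchor_concentration(anchors):
        is_branded = any(term.lower() in anchor for term in branded_terms)
        if not is_branded and share >= threshold:
            flagged.append((anchor, round(share, 2)))
    return flagged


# Hypothetical anchor list from a backlink export.
anchors = [
    "buy unlisted shares", "buy unlisted shares", "Buy Unlisted Shares",
    "FinX", "finx.example", "this guide", "https://finx.example/blog",
]

flagged = flag_concentrated(anchors, branded_terms=["finx"])
print(flagged)
```

A healthy profile usually shows branded and URL anchors dominating the top of this list, so a commercial phrase at the top is worth a closer look.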

Disavow proactively, not reactively. Most sites carrying manual-penalty-level risk have not received one yet. For a practical example of how proactive backlink monitoring creates opportunity rather than just damage limitation, see how broken link building turns competitor link decay into acquisition advantage.

02 Identify your ten highest-value pages and treat them like they are about to be reviewed

Not reviewed by an algorithm. Reviewed by a person who is deciding whether your site should rank.

What would a quality rater conclude about the depth, accuracy, and trustworthiness of your content? Update statistics, expand thin sections, and remove or consolidate pages that provide no differentiated value. For guidance on what genuinely differentiated content looks like, the post on making content stand out in the AI era covers the same quality signals Google's helpful content system evaluates.

03 Close the E-E-A-T gaps that are most common and most damaging

Author bios with no credentials, About pages that read like marketing copy, editorial process pages that do not exist. These are particularly consequential in YMYL verticals, but they affect every industry.

Google's quality guidelines are public. Read them and then look at your site honestly.

04 Fix the technical issues that have been on the backlog

Core Web Vitals failures, crawl budget waste, internal 404s, and duplicate meta tags rarely trigger penalties. But they quietly suppress organic performance. Pages that should rank struggle to gain visibility.

A clean technical foundation changes that. It ensures the positive signals you have built are actually discovered, interpreted, and weighted correctly by search engines.
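Two of these issues, duplicate titles and internal 404s, can be surfaced from almost any crawl export. The sketch below assumes each crawled page is a dict with `url`, `title`, and `status` keys. Those field names and the sample rows are hypothetical, so map them to whatever your crawler produces.

```python
from collections import defaultdict


def find_duplicate_titles(pages):
    """Map each title shared by more than one live page to the URLs using it."""
    by_title = defaultdict(list)
    for page in pages:
        if page["status"] == 200:
            by_title[page["title"].strip().lower()].append(page["url"])
    return {title: urls for title, urls in by_title.items() if len(urls) > 1}


def find_404_pages(pages):
    """Return the crawled URLs that responded with a 404 status."""
    return sorted(p["url"] for p in pages if p["status"] == 404)


# Hypothetical crawl export rows.
pages = [
    {"url": "/category/a", "title": "Unlisted Shares | FinX", "status": 200},
    {"url": "/category/b", "title": "Unlisted Shares | FinX", "status": 200},
    {"url": "/blog/guide", "title": "Guide to Unlisted Shares", "status": 200},
    {"url": "/blog/old-post", "title": "", "status": 404},
]

duplicates = find_duplicate_titles(pages)
broken = find_404_pages(pages)
print(duplicates)
print(broken)
```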

05 Document what you have built and treat it as a persistent signal

The reconsideration request succeeded because we had proof — screenshots, data exports, and links to the improved pages.

Use structured data, schema markup, author credentials, and strong internal linking. These signals help Google understand what your site is and why it deserves to rank.
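As one way to make author credentials machine-readable, the sketch below builds schema.org `Article` markup with a nested `Person` author and wraps it in a JSON-LD script tag. The properties used (`headline`, `dateModified`, `author`, `jobTitle`, `sameAs`) are standard schema.org terms. The helper name, the author details, and the URLs are placeholders invented for the example.

```python
import json


def article_jsonld(headline, url, date_modified, author):
    """Build schema.org Article markup with a Person author as a Python dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "jobTitle": author["job_title"],
            "url": author["profile_url"],
            "sameAs": author["same_as"],
        },
    }


# Placeholder values for illustration only.
markup = article_jsonld(
    headline="How Unlisted Shares Are Valued",
    url="https://finx.example/blog/unlisted-share-valuation",
    date_modified="2024-04-02",
    author={
        "name": "Jane Doe",
        "job_title": "Research Analyst",
        "profile_url": "https://finx.example/authors/jane-doe",
        "same_as": ["https://www.linkedin.com/in/jane-doe-example"],
    },
)

script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The markup should describe only what is actually visible on the page. An author bio that exists in the schema but not in the content is unlikely to help.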

What This Actually Cost (And What It Returned)

The work described above took approximately three weeks of focused effort across an account manager, a technical SEO specialist, and two content editors. The client saw a 60% traffic loss that lasted roughly 25 days before full recovery, which, at their then-current traffic monetization, translated to meaningful lead pipeline disruption.

By month two post-recovery, organic leads were running 35% above the pre-penalty average. The improvements to content quality and E-E-A-T have continued to compound over the subsequent months, with no sign of diminishing returns.

The honest trade-off: a penalty recovery under time pressure with a client relationship on the line is a brutal way to fund a site improvement sprint. The same work done proactively, without crisis-driven urgency, would have produced the same organic uplift with none of the pipeline disruption. That is the lesson worth internalizing.

The Compounding Case for Proactive Quality Work

Sites that meet Google's quality benchmarks before there is pressure to meet them tend to perform more consistently across algorithm updates. The brands that gain market share after core updates are, almost without exception, the ones that were already operating closer to Google's quality standards than their competitors. At scale, enterprise SEO programs that build proactive quality processes across large site architectures are substantially better positioned to absorb algorithm updates without the traffic disruption that reactive teams face.

A Note on Reconsideration Requests for Sites With Legacy Actions

If your site received a manual action in the past and you resolved it through standard recovery at the time, the process described here is still available to you.

If your site has improved materially since that recovery — particularly around content quality, E-E-A-T, and technical performance — a new reconsideration request documenting those improvements can prompt a fresh review of the domain.

This should not be submitted casually or speculatively. Do the work first. Then document it thoroughly. The request should read like a case for the site's organic merit, not a petition for sympathy.

Frequently Asked Questions

What should I include in a Google reconsideration request to maximize approval chances?

A strong request goes beyond listing removed links. Include documented outreach evidence (emails sent, dates, responses received), the disavow file you submitted, and before-and-after evidence of site improvements — content updates, technical fixes, E-E-A-T signals. Frame the document as a case for why the site deserves to rank, not merely an apology for past practices. Screenshots, Lighthouse reports, and links to improved pages carry more weight than written assertions alone.

How do I create a disavow file and what format does Google require?

A disavow file is a plain text (.txt) file. Each line lists either a specific URL (https://example.com/page) or a full domain using the format domain:example.com. Upload it through the Google Search Console Disavow Tool. Only include links you genuinely cannot remove through outreach — and be conservative. Disavowing legitimate links by mistake can suppress your own rankings.

Can a Google core algorithm update cause a traffic drop similar to a manual penalty?

Yes. Algorithmic changes, including core updates, the Helpful Content system, and Penguin, can produce sudden, significant traffic drops that look much like a manual penalty in analytics. The key diagnostic: check Search Console's Manual Actions section. If it shows "No issues detected," you are dealing with an algorithmic signal, not a manual review. Recovery requires improving content quality and E-E-A-T over time, not a reconsideration request.

Should I remove bad links or disavow them, and what is the difference?

Both. First, attempt removal through outreach — contact the site owner and request removal. If the site does not respond within 2 weeks, or the link cannot be removed, disavow the domain. Document all outreach attempts even when unsuccessful, as this documentation strengthens your reconsideration request. Disavowal without attempted removal first is a weaker signal to Google's reviewers.

How do I proactively protect my site from future Google penalties?

Audit your backlink profile quarterly using Ahrefs or Semrush, looking for anchor text concentration, unnatural link velocity, and low-relevance referring domains. Maintain E-E-A-T signals year-round — genuine author credentials, editorial process pages, and factually current content. Build links only through tactics that create something worth linking to: original research, guest contributions on relevant publications, and earned media. The manual penalty risk profile drops significantly when your link acquisition mirrors how a well-regarded editorial publication builds its own link profile.

Key Takeaways

The $30,000 monthly account at risk recovered fully. The client's organic traffic is now running at a higher baseline than before the penalty hit.

The improvements made under duress have become part of the standard playbook for how the team approaches account maintenance. None of that was guaranteed when the penalty notification appeared.

What made the difference was the decision to treat a mandatory review as an opportunity rather than a formality. That decision cost three weeks of intensive work. It has paid out for considerably longer.

The framework is not penalty-specific. Treat your most important pages as though a quality rater is about to review them, audit your backlink profile as though an enforcement notice just arrived, and document the strength of what you have built. That is proactive quality work. It is also how you make a penalty, should one ever arrive, into an accelerant rather than a setback.

If you are managing a site with a manual action, a history of aggressive link building, or organic performance that has plateaued without an obvious explanation, we can tell you what we see and what we would do about it. Book a free site review →

Aditya Kathotia

Founder & CEO

CEO of Nico Digital and founder of Digital Polo, Aditya Kathotia is a trailblazer in digital marketing. He's powered 500+ brands through transformative strategies, enabling clients worldwide to grow revenue exponentially. Aditya's work has been featured on Entrepreneur, Economic Times, Hubspot, Business.com, Clutch, and more. Join Aditya Kathotia's orbit on LinkedIn to gain exclusive access to his treasure trove of niche-specific marketing secrets and insights.